AI and the Evolution of Relational Schemas

(Previously in this series: "Power of Schemas," which detailed the theoretical foundations of structured data, and "The Great Data Debate," which introduced schema-on-write vs. schema-on-read.)


A common argument holds that artificial intelligence thrives on unstructured data, which casts the "rigidity" of schemas (as discussed in our second post) as a hindrance. Yet this perceived rigidity is precisely what ensures data integrity: the accuracy, consistency, and reliability of data. And for AI to produce trustworthy results, integrity is paramount.

Why AI Still Needs a Backbone of Integrity

Key aspects of data integrity, often enforced by well-defined schemas, include:

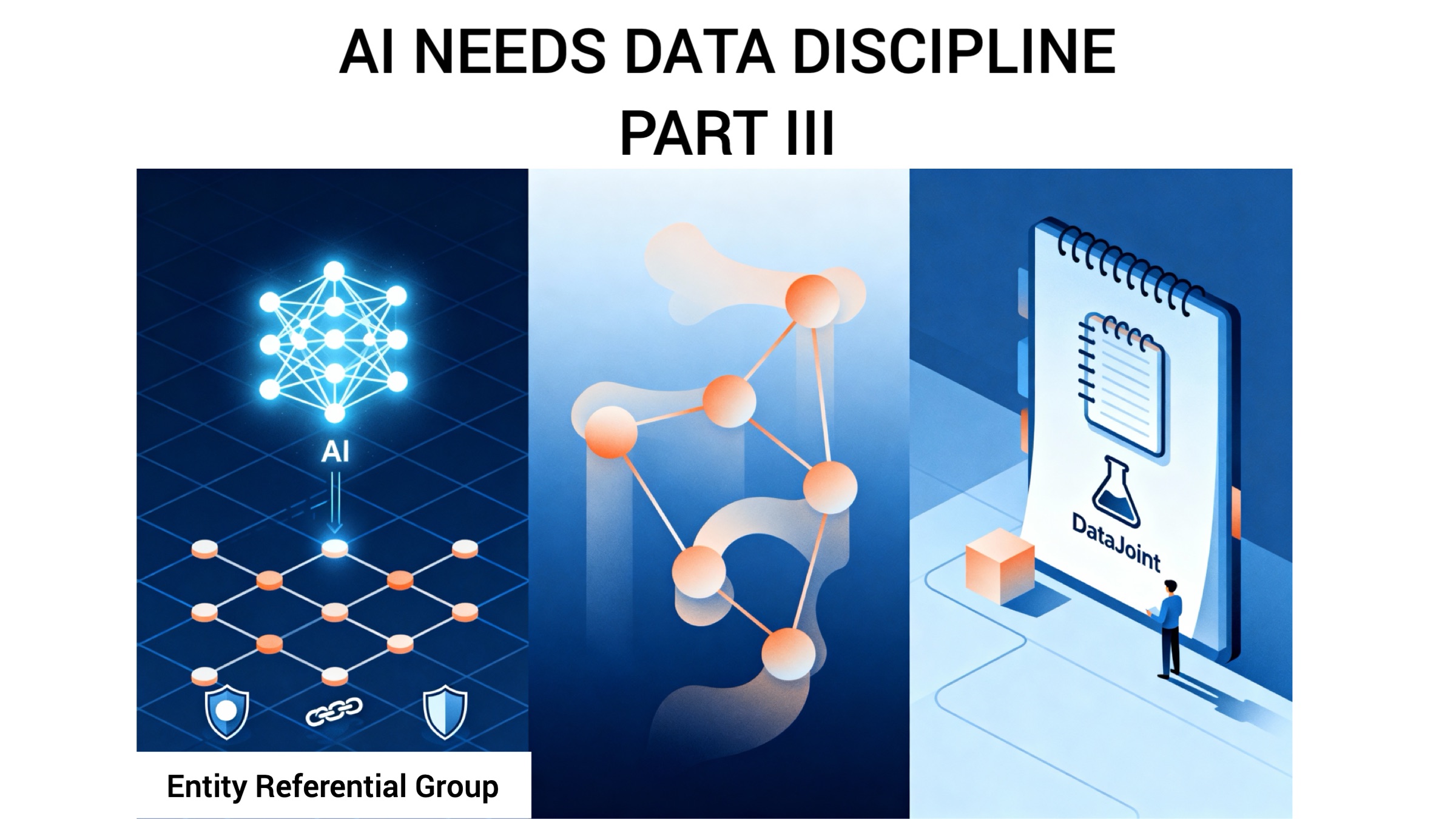

- Entity Integrity: Ensuring each real-world entity is uniquely identified, typically through a primary key. Think of this as every citizen having a unique ID, so that no two records can be mistaken for one another.

- Referential Integrity: Guaranteeing that relationships between data remain valid, typically through foreign keys. This ensures, for example, that every lab result is linked to a patient who actually exists in the database, preventing orphaned records and critical misattributions.

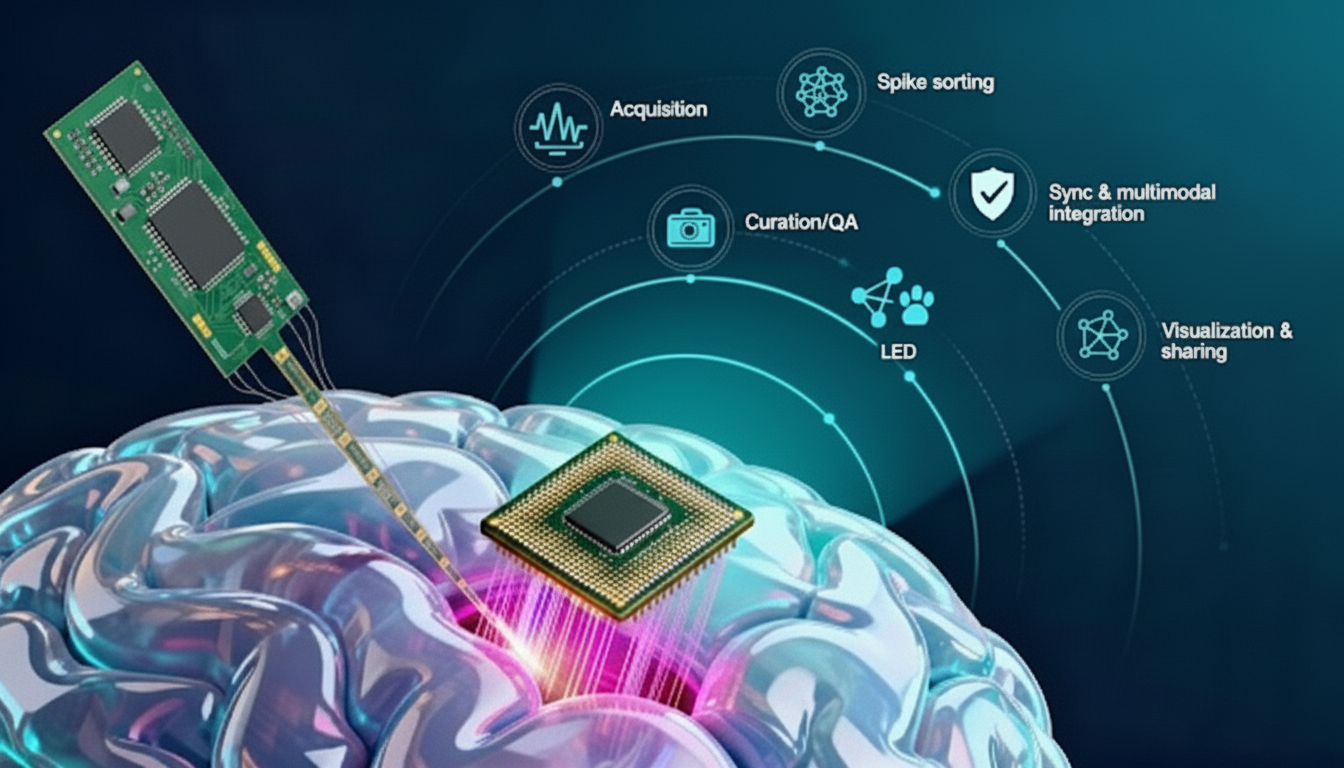

- Group (Compositional) Integrity: Treating entities composed of multiple essential parts as inseparable units. For instance, if an algorithm extracts several signal traces from one recording, group integrity ensures these are managed as a complete set; removing one arbitrarily would invalidate the analysis.
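To make the three kinds of integrity concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The tables (`patient`, `lab_result`, `recording`, `signal_trace`) and helper functions are hypothetical illustrations, not a real schema: a primary key enforces entity integrity, a foreign key enforces referential integrity, and a single transaction plus `ON DELETE CASCADE` treats a recording and its traces as one inseparable unit.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces foreign keys only when enabled

conn.executescript("""
CREATE TABLE patient (
    patient_id INTEGER PRIMARY KEY,   -- entity integrity: one row per patient
    name       TEXT NOT NULL
);
CREATE TABLE lab_result (
    patient_id INTEGER NOT NULL REFERENCES patient(patient_id),  -- referential integrity
    test_name  TEXT NOT NULL,
    value      REAL NOT NULL,
    PRIMARY KEY (patient_id, test_name)
);
CREATE TABLE recording (
    recording_id INTEGER PRIMARY KEY
);
CREATE TABLE signal_trace (           -- parts of one recording (group integrity)
    recording_id INTEGER NOT NULL
        REFERENCES recording(recording_id) ON DELETE CASCADE,
    trace_idx    INTEGER NOT NULL,
    PRIMARY KEY (recording_id, trace_idx)
);
""")


def try_insert(sql, params):
    """Run one INSERT; return None on success or the constraint error message."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(sql, params)
        return None
    except sqlite3.IntegrityError as err:
        return str(err)


def insert_recording(recording_id, trace_indices):
    """Insert a recording and all of its traces as one all-or-nothing unit."""
    try:
        with conn:  # a single transaction covers the whole group
            conn.execute("INSERT INTO recording VALUES (?)", (recording_id,))
            conn.executemany(
                "INSERT INTO signal_trace VALUES (?, ?)",
                [(recording_id, idx) for idx in trace_indices],
            )
        return True
    except sqlite3.IntegrityError:
        return False


results = {
    "new patient": try_insert("INSERT INTO patient VALUES (?, ?)", (1, "Ada")),
    "duplicate patient_id": try_insert("INSERT INTO patient VALUES (?, ?)", (1, "Bob")),
    "lab result for unknown patient": try_insert(
        "INSERT INTO lab_result VALUES (?, ?, ?)", (99, "glucose", 5.4)),
    "lab result for patient 1": try_insert(
        "INSERT INTO lab_result VALUES (?, ?, ?)", (1, "glucose", 5.4)),
}
for label, error in results.items():
    print(f"{label}: {'accepted' if error is None else 'rejected: ' + error}")

complete_ok = insert_recording(1, [0, 1, 2])  # a complete, valid set of traces
broken_ok = insert_recording(2, [0, 1, 1])    # duplicate trace: the whole group is rolled back
print(complete_ok, broken_ok)
```

Note that SQLite checks foreign keys only after `PRAGMA foreign_keys = ON`; databases such as PostgreSQL enforce them by default.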

The rise of AI doesn't fundamentally change the tradeoffs between schema-on-write and schema-on-read (explored in "The Great Data Debate"). While AI can process unstructured "data soup," an AI working with well-structured data is like a detective with neatly organized evidence logs: connections are clearer, verification is easier, and conclusions are far more reliable. In fact, an AI might even express its understanding of unstructured data by constructing a relational schema, offering a verifiable representation of its inferred findings.
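A schema proposed by an AI becomes verifiable once data is loaded under it. The minimal sketch below assumes a model has already turned free-text notes into candidate rows; the proposed schema, the extracted records, and the `check_hypothesis` helper are all hypothetical. Each rejected row points to a concrete problem in the inferred findings: a duplicate, a reference to something never described, or an implausible value.

```python
import sqlite3

# Hypothetical schema an AI might propose after reading free-text lab notes.
PROPOSED_SCHEMA = """
CREATE TABLE subject (
    subject_id TEXT PRIMARY KEY,
    species    TEXT NOT NULL CHECK (species IN ('mouse', 'rat'))
);
CREATE TABLE session (
    subject_id  TEXT NOT NULL REFERENCES subject(subject_id),
    session_num INTEGER NOT NULL CHECK (session_num > 0),
    duration_s  REAL NOT NULL CHECK (duration_s > 0),
    PRIMARY KEY (subject_id, session_num)
);
"""

# Hypothetical rows extracted from unstructured notes (illustrative, not real data).
EXTRACTED = [
    ("subject", ("M01", "mouse")),
    ("subject", ("M02", "mouse")),
    ("session", ("M01", 1, 1800.0)),
    ("session", ("M01", 1, 1750.0)),   # same session extracted twice
    ("session", ("M03", 1, 1200.0)),   # subject never described in the notes
    ("session", ("M02", 2, -5.0)),     # implausible duration
]


def check_hypothesis(schema_sql, records):
    """Load records under the proposed schema; return (accepted count, violations)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("PRAGMA foreign_keys = ON")
    conn.executescript(schema_sql)
    accepted, violations = 0, []
    for table, row in records:
        placeholders = ", ".join("?" * len(row))
        try:
            with conn:
                conn.execute(f"INSERT INTO {table} VALUES ({placeholders})", row)
            accepted += 1
        except sqlite3.IntegrityError as err:
            violations.append((table, row, str(err)))
    conn.close()
    return accepted, violations


accepted, violations = check_hypothesis(PROPOSED_SCHEMA, EXTRACTED)
print(f"accepted {accepted} of {len(EXTRACTED)} records")
for table, row, reason in violations:
    print(f"  {table} {row}: {reason}")
```

Rejected rows do not prove the schema wrong; they mark exactly where the schema and the extracted findings disagree, which is what makes the representation checkable.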

Evolving the Model for Modern Data Challenges

Traditional relational implementations do face challenges with modern data:

- Handling Large Objects: Efficiently storing and querying massive objects like videos or raw instrument outputs can be impractical in classic relational structures.

- Schema Evolution: Modifying schemas in large, live databases can be cumbersome, hindering agility.

- Integrating Computation: Deeply embedding complex computations (often in Python) and managing their dependencies within the data model requires extensions beyond standard relational frameworks.
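One widely used workaround for large objects keeps the bulky bytes outside the table and stores only a location plus a checksum in the relational row, so the database still vouches for exactly which bytes belong to which entity. The sketch below assumes a local temporary directory as a stand-in for an object store; the table and function names (`raw_recording`, `put_object`, `get_object`) are hypothetical.

```python
import hashlib
import sqlite3
import tempfile
from pathlib import Path

# Stand-in for an object store or shared file system (an assumption for the sketch).
STORE = Path(tempfile.mkdtemp())

conn = sqlite3.connect(":memory:")
conn.execute("""
CREATE TABLE raw_recording (
    recording_id INTEGER PRIMARY KEY,
    sha256       TEXT NOT NULL,     -- checksum pins the row to exact bytes
    size_bytes   INTEGER NOT NULL,
    rel_path     TEXT NOT NULL      -- where the bytes live outside the database
)""")


def put_object(recording_id: int, payload: bytes) -> None:
    """Write the bytes to the store, then record their location and checksum."""
    digest = hashlib.sha256(payload).hexdigest()
    rel_path = f"{digest[:2]}/{digest}"  # content-addressed layout
    target = STORE / rel_path
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_bytes(payload)
    with conn:
        conn.execute(
            "INSERT INTO raw_recording VALUES (?, ?, ?, ?)",
            (recording_id, digest, len(payload), rel_path),
        )


def get_object(recording_id: int) -> bytes:
    """Read the bytes back; refuse to return them if the checksum no longer matches."""
    digest, rel_path = conn.execute(
        "SELECT sha256, rel_path FROM raw_recording WHERE recording_id = ?",
        (recording_id,),
    ).fetchone()
    payload = (STORE / rel_path).read_bytes()
    if hashlib.sha256(payload).hexdigest() != digest:
        raise ValueError(f"recording {recording_id}: stored bytes fail checksum")
    return payload


payload = bytes(range(256)) * 4096  # 1 MiB of synthetic "instrument output"
put_object(1, payload)
print(len(get_object(1)))  # 1048576
```

The tradeoff is that the file store sits outside the database's transactions, so a real system also needs a way to clean up files whose rows were never committed.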

Addressing these means evolving the relational approach. New models need to support large objects more natively, allow schemas to adapt without sacrificing integrity, and treat computation as an integral part of the data pipeline. AI itself could help here, for example by inferring relational structures from unstructured data and offering them as verifiable hypotheses about how that data is organized.
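One common way to let a schema adapt without giving up integrity is a sequence of small, versioned, additive migrations, each applied inside a transaction. The minimal sketch below assumes SQLite and uses its `PRAGMA user_version` counter as the version store; the `MIGRATIONS` list and `migrate` function are hypothetical and not part of any framework mentioned in this post.

```python
import sqlite3

# Ordered, append-only list of migrations; entry i upgrades version i -> i + 1.
MIGRATIONS = [
    ["CREATE TABLE session (session_id INTEGER PRIMARY KEY, subject TEXT NOT NULL)"],
    # Additive change: a default keeps every existing row valid under NOT NULL.
    ["ALTER TABLE session ADD COLUMN rig TEXT NOT NULL DEFAULT 'unknown'"],
    # New dependent table; the foreign key protects it from orphaned notes.
    ["CREATE TABLE session_note ("
     " session_id INTEGER NOT NULL REFERENCES session(session_id),"
     " note TEXT NOT NULL)"],
]


def migrate(conn, target=len(MIGRATIONS)):
    """Apply pending migrations up to `target`, each in its own transaction."""
    current = conn.execute("PRAGMA user_version").fetchone()[0]
    for version in range(current, target):
        conn.execute("BEGIN")
        try:
            for statement in MIGRATIONS[version]:
                conn.execute(statement)
            conn.execute(f"PRAGMA user_version = {version + 1}")
            conn.execute("COMMIT")
        except sqlite3.Error:
            conn.execute("ROLLBACK")  # a failed step leaves the schema unchanged
            raise
    return conn.execute("PRAGMA user_version").fetchone()[0]


# isolation_level=None lets us issue BEGIN/COMMIT ourselves, so DDL is transactional.
conn = sqlite3.connect(":memory:", isolation_level=None)
conn.execute("PRAGMA foreign_keys = ON")

migrate(conn, target=1)  # the original schema
conn.execute("INSERT INTO session (session_id, subject) VALUES (1, 'M01')")
version = migrate(conn)  # evolve the live table
rows = conn.execute("SELECT session_id, subject, rig FROM session").fetchall()
print(version, rows)  # 3 [(1, 'M01', 'unknown')]
```

Additive changes with defaults keep old rows valid; destructive changes such as dropping or renaming columns need more care, since dependent data and code may still rely on them.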

DataJoint: A Modern Example of Structured, Computable Data Management

The DataJoint framework exemplifies such an evolved, structured approach, especially for scientific AI applications. It refines the relational model by integrating computational dependencies directly into the schema. This treats computations as first-class citizens, allowing entire scientific workflows—from data acquisition and processing to analysis—to be represented as a unified, integrity-checked data pipeline. Imagine a digital lab notebook combined with an automated assistant, where every experiment (computation) is precisely linked to its data inputs and methods, ensuring results are traceable, verifiable, and reproducible.
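The idea of computation as part of the schema can be imitated in a few lines of plain SQLite: a computed table is keyed by its upstream data, a per-key function produces each result, and a `populate` step computes only what is still missing. The names `make` and `populate` loosely echo DataJoint's convention for computed tables, but this is a hypothetical stand-alone toy, not DataJoint's API or implementation, and the `recording` and `summary` tables are invented for illustration.

```python
import sqlite3
import statistics

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")
conn.executescript("""
CREATE TABLE recording (             -- upstream data (entered or imported)
    recording_id INTEGER PRIMARY KEY,
    samples      TEXT NOT NULL        -- comma-separated values, kept tiny for the sketch
);
CREATE TABLE summary (               -- computed: exactly one row per recording
    recording_id INTEGER PRIMARY KEY
        REFERENCES recording(recording_id) ON DELETE CASCADE,
    mean_value   REAL NOT NULL
);
""")


def make(key):
    """Compute the summary row for one upstream key."""
    (samples,) = conn.execute(
        "SELECT samples FROM recording WHERE recording_id = ?", (key,)).fetchone()
    values = [float(v) for v in samples.split(",")]
    return (key, statistics.fmean(values))


def populate():
    """Run make() for every upstream key that has no computed row yet."""
    pending = [row[0] for row in conn.execute("""
        SELECT r.recording_id FROM recording r
        LEFT JOIN summary s ON s.recording_id = r.recording_id
        WHERE s.recording_id IS NULL""")]
    for key in pending:
        with conn:  # each result is committed on its own
            conn.execute("INSERT INTO summary VALUES (?, ?)", make(key))
    return len(pending)


with conn:
    conn.executemany("INSERT INTO recording VALUES (?, ?)", [(1, "1,2,3"), (2, "4,6")])
first_run = populate()   # computes recordings 1 and 2
with conn:
    conn.execute("INSERT INTO recording VALUES (?, ?)", (3, "10"))
second_run = populate()  # computes only the new recording
with conn:
    conn.execute("DELETE FROM recording WHERE recording_id = 1")  # its result goes too
remaining = conn.execute("SELECT * FROM summary ORDER BY recording_id").fetchall()
print(first_run, second_run, remaining)  # 2 1 [(2, 5.0), (3, 10.0)]
```

Because each computed row's key is also a foreign key with `ON DELETE CASCADE`, deleting an input also deletes the results derived from it, so stale results cannot outlive the data they came from.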

The Enduring Need for Structure

Ultimately, the choice between structured, unstructured, or hybrid data strategies depends on specific needs. Where rapid ingestion of diverse data is key and some inconsistency is tolerable, schema-on-read holds advantages. However, for systems demanding high data integrity, consistency, and provable relationships—especially when AI is involved in critical decision-making—the mathematical rigor and enforcement capabilities of well-defined schemas remain essential. AI is a powerful analytical tool, but it doesn’t negate the foundational need for structure when trustworthiness and reliability are non-negotiable.
